Comparison of Cloud Run and Lambda to render Blender scenes serverlessly

JR Beaudoin5 min read

In a recent post, I explained how to run Blender render jobs on AWS Lambda functions, using Lambda container images. As a follow-up, today I am looking at the same workload running on Cloud Run, the easiest serverless way to run a container on Google Cloud Platform.

Test protocol

vCPUs and RAM

A quick disclaimer is that if you can choose between GCP and AWS, in this case, AWS seems to be a better choice because your Lambda functions can use up to 6 vCPUs whereas Cloud Run is limited to 4. When rendering Blender scenes, increasing CPU power has a huge impact on rendering times. I included a Lambda function with 6 vCPUs in our benchmark and it’s about 30% faster than running on 4 vCPU-solutions (be it on GCP or AWS).

The topic of memory is interesting. With Lambda, you cannot independently choose the number of vCPUs and amount of RAM. Based on the amount of RAM you pick, the Lambda will be allocated a certain number of vCPUs. If you pick 10GB of RAM (the largest option available on Lambda) you will get 6 vCPUs. 6.8GB of RAM give you 4 vCPUs, so that’s what I went for in order to have the same number of vCPUs on both Cloud Run and Lambda.

With Cloud Run on the other hand, you can independently set vCPU count and RAM amount. The maximum amount of RAM available is 16GB which is significantly more generous than what Lambda offers. Depending on what scene you are willing to render, this can be a crucial point because larger 3D scenes in Blender will require more RAM. So it might happen that you simply cannot render the scene you want on a Lambda with 10GB of RAM. In the use-case that led us to set up this system, I was rendering very small scenes so 10GB were more than enough.

Container image

The container image I am using with Cloud Run is very similar to the one I used on Lambda and described in the previous post. It is also based on the Ubuntu image with Blender that is maintained by the R&D team at the New York Times. The main difference is that I do not install the AWS specific SDK and I run an HTTP server with Gunicorn. The impact of this server on the overall performance of the solution will be minimal since the large majority of the response time is spent rendering the Blender scene.

Region

This recent blog post published by Guillaume Blaquiere shows that not all Google Cloud Platform regions are equal when it comes to Cloud Run performance. I had initially set up our experiment in the us-east4 region and, after reading this, I decided to add a test run in the fastest region. In his article, Guillaume Blaquiere finds out that his code runs 11% faster in the us-west3 region compared to us-east4. I got similar results.

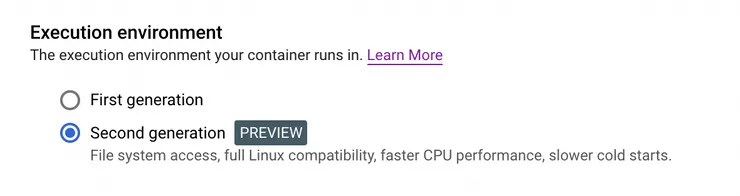

Execution environment

Another variable I took into account is Cloud Run’s execution environment. Google gives us the option to either run in a first or second generation execution environment. The second generation is in preview at the moment.

Test scene

For our test, I render a 500-pixel-wide square image of a test scene provided by Mike Pan and available on Blender’s Demo Files page. I used Blender’s Cycle rendering engine. You can see the output below.

Results

| Test settings | Specs | Region | Average duration of 4 function executions (s) |

|---|---|---|---|

| Cloud Run | 4 vCPUs, 16GB, first gen | us-east4 | 79.8 |

| Cloud Run | 4 vCPUs, 16GB, second gen | us-east4 | 81.4 |

| Cloud Run | 4 vCPUs, 16GB, first gen | us-west3 | 77.7 |

| Cloud Run | 4 vCPUs, 16GB, second gen | us-west3 | 71.5 |

| Lambda | 4 vCPUs, 6.8GB, | us-east-1 | 76.1 |

| Lambda | 6 vCPUs, 10GB, | us-east-1 | 51.5 |

A few observations:

- The results with 4 vCPUs are all pretty similar

- More vCPUs means noticeably faster render times. That’s not breaking news but in our case this really meant Lambda was the way to go.

- We can indeed see that Cloud Run does not perform in the same way in all regions. In this case, it performed 14% slower in us-east4 compared to us-west3.

- The second generation execution environment of Cloud Run seems to be significantly faster than the first in the us-west3 region but slightly slower in the us-east4 region. Given the fact that this new generation is in preview for now, we can expect things to get better in the future but, for now, test

- Using Cloud Run on 4 vCPUs in the us-west3 region is about 6% faster than Lambda with 4 vCPUs in the us-east-1 region.

Next steps

The impact of the region on Cloud Run performance made us wonder if there is a comparable impact on Lambda. So I may update this article with additional information on this.

I would also like to measure the performance impact of using Graviton2 Lambda functions for our task.

It would be interesting to see a serverless solution that lets us use GPUs as this would dramatically speed up rendering jobs. But would the cost of such a solution make it a better solution for our use case? I would have to try!

I will post more article on the topic of serverless Blender in the future. Follow me on Twitter to receive updates!