Your React App Deserves Proper SEO

Rémi Mondenx, 8 min read

SEO has become an integral part of a developer’s daily work. Creating a robust, performant and well-designed web app is great, but useless if you don’t manage to get traffic to it. That’s where SEO comes into play. In this article, you will see why good SEO is difficult to achieve for a full app built with React, and find solutions to overcome these obstacles.

What is SEO and why should you care about it?

Search Engine Optimization is a set of techniques whose goal is to improve the visibility of a website on web search engines. Basically, when a search engine looks for the best results for a user’s search, it looks into a huge database called the index. The index is filled by bots, the crawlers, whose job is to go from site to site, analyze them and store the information they gather in the index. The better optimized your website is, the better its chances of appearing in the top ranks of the results, which is crucial. Indeed, more than 60% of the traffic goes to the first three results of a web search. Thus, if you want your website to be visited, you should do your best to rank well on search engines.

Why is it more difficult with React?

When creating a web app using React, you will face several issues concerning SEO.

Only one URL

React apps are usually built as Single Page Applications (SPA), so by default your application will have only one URL. This is not a problem at all if you want to create a showcase site. However, it becomes one if you want to build a more complex app, with several virtual pages that users can navigate between. You can simulate a page change by switching components in response to the user’s interactions with your app, but it will remain the same page with the same URL. So, crawlers will only be able to read part of the application: the components rendered for the first virtual page, the one displayed when following the unique URL. If each page had its own URL, crawlers would be able to read your whole application page by page, and all of its content would be seen.

All in JavaScript

Speaking of crawlers… Search engines have trouble rendering JavaScript (for the few that can actually do so), and React is above all a JavaScript library. A classic React application is composed of an index.html file with barely any content in its body: a div which is the entry point for React. The components you created are then injected into this div by JavaScript. So, if crawlers don’t manage to run the JavaScript, they may think that your whole application consists of a single empty div.
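To make the empty div concrete, here is a minimal sketch of what a crawler that does not run JavaScript could read from such a page. The markup, the bundle name and the `visibleBodyText` helper are assumptions made for illustration, and the text extraction is only a rough approximation of what a real crawler does.

```javascript
// The kind of index.html a client-rendered React app typically ships.
// The markup and the bundle name are illustrative assumptions.
const indexHtml = `<!DOCTYPE html>
<html>
  <head><title>My awesome app</title></head>
  <body>
    <div id="root"></div>
    <script src="/bundle.js"></script>
  </body>
</html>`;

// Rough approximation of what a crawler that does not run JavaScript
// can read: the body with scripts and tags removed.
function visibleBodyText(html) {
  const body = html.match(/<body[^>]*>([\s\S]*)<\/body>/i);
  if (!body) return "";
  return body[1]
    .replace(/<script[\s\S]*?<\/script>/gi, "") // scripts are never executed
    .replace(/<[^>]+>/g, "") // drop the remaining tags
    .trim();
}

// The body carries no readable text at all.
console.log(JSON.stringify(visibleBodyText(indexHtml)));
```

Everything a visitor eventually sees is produced later by the bundle, which is exactly the part such a crawler never runs.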

First solution: using React Router

The one URL problem

The first problem I pointed out is that with React all your components are rendered on a single page. React Router takes the client-side approach one step further by adding a router that runs in the browser.

Let’s see how it works with an example:

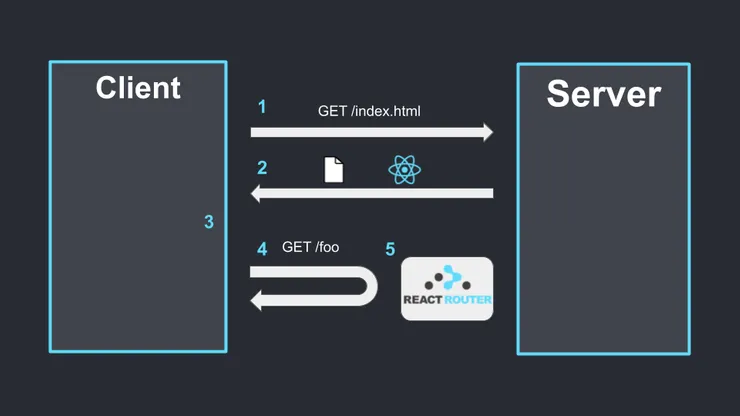

Example of navigation with React Router


- When a user wants to visit the website, the browser sends a GET /index.html request to the server.

- The server sends back the index.html page, which contains the scripts that launch React and React Router.

- The application is loaded on the client side.

- The user clicks on a button to go to a new page, /foo.

- React Router intercepts the navigation before a request goes to the server and handles the page change itself, by updating the rendered components and changing the URL locally.

Thus, you will have several URLs.
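The mechanism behind the last step can be sketched in plain JavaScript. This is not React Router’s actual code: the route table, the component names and `createRouter` are hypothetical, and a stand-in object replaces the browser’s `window.history` so that the sketch also runs outside a browser.

```javascript
// Hypothetical route table: URL path -> name of the component to render.
const routes = new Map([
  ["/", "HomePage"],
  ["/foo", "FooPage"],
]);

// Builds a tiny router around a History-like object. In a browser that
// object would be window.history.
function createRouter(history) {
  let current = routes.get("/");
  return {
    // Called when the user clicks an internal link.
    navigate(path) {
      history.pushState({}, "", path); // change the URL locally, no server request
      current = routes.get(path) ?? "NotFoundPage"; // swap the rendered component
      return current;
    },
    currentPage: () => current,
  };
}

// Stand-in for window.history that just records the visited URLs.
const fakeHistory = {
  urls: ["/"],
  pushState(state, title, url) {
    this.urls.push(url);
  },
};

const router = createRouter(fakeHistory);
router.navigate("/foo");
console.log(router.currentPage(), fakeHistory.urls);
```

The URL changes and a different component is shown, yet the server is never contacted, which is what makes the navigation feel instant.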

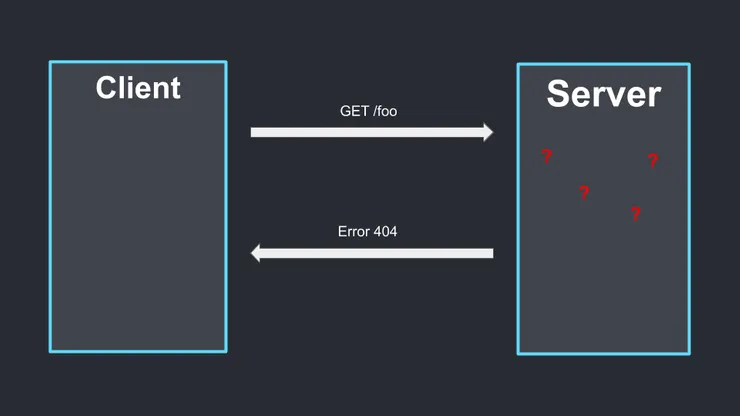

However, now that you are doing client-side routing, another issue appears. Can you see it? To understand this next problem, let’s continue the previous example. The user saw the /foo page and wants to share it with his friend. So, he texts him: “Look at what I just found: go to my.awesome.app.com/foo”. The second person clicks on the link and lands on a 404 page.

The second user arrives on a 404 error


Indeed, your server only knows the index.html page. Only React Router can understand the request GET my.awesome.app.com/foo once it is loaded on the client side.

So, React Router lets your users navigate within your application locally through internal links, but there is only one entry point to the application: your index.html. Every time a page other than the index is requested from the server, by following a link from outside the app, refreshing or typing the URL directly in the address bar, the result is a 404 error. But don’t panic, solutions exist!

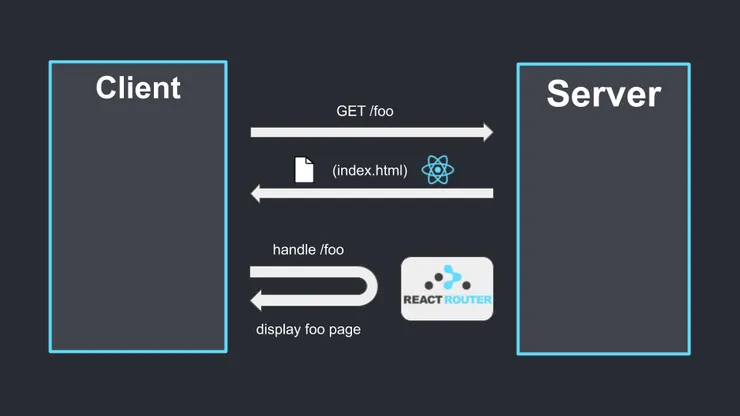

Creating a catch-all route

A simple way to handle this problem is to create a catch-all route on your server. The principle is quite simple: have the server answer every request with index.html. Once index.html is loaded, React Router takes over and handles the routing. More precisely, the server should only do this for requests it cannot resolve itself, because we don’t want requests for media files, for instance, to be answered with index.html.

The server sends the index.html and React Router displays the foo page


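For example, if you are on an Apache server, you could write this in your .htaccess:

    # Enable the rewrite engine (requires mod_rewrite)
    RewriteEngine On
    RewriteBase /
    # Requests for index.html itself are served as-is
    RewriteRule ^index\.html$ - [L]
    # Requests that match an existing file or directory are left alone
    RewriteCond %{REQUEST_FILENAME} !-f
    RewriteCond %{REQUEST_FILENAME} !-d
    # Everything else is answered with index.html
    RewriteRule . /index.html [L]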
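If your server runs JavaScript rather than Apache, the same decision can be sketched as a small function. The names `resolveFile` and `existingFiles` are hypothetical, and the set of files stands in for a real check on disk; in an actual server this function would be called from the request handler.

```javascript
// Decides which file to send for a request path.
// `existingFiles` is a hypothetical set of the static files on disk.
function resolveFile(requestPath, existingFiles) {
  // Real files (scripts, images, ...) are served as they are.
  if (existingFiles.has(requestPath)) return requestPath;
  // Everything else falls back to the single entry point.
  return "/index.html";
}

const files = new Set(["/index.html", "/bundle.js", "/logo.png"]);
console.log(resolveFile("/logo.png", files)); // prints "/logo.png"
console.log(resolveFile("/foo", files)); // prints "/index.html"
```

The first branch plays the role of the "existing file" conditions, and the fallback plays the role of the final rewrite to index.html.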

React Helmet

Now you have an app with several URLs, all working. That’s great. However, there is still a small step left for your pages to be fully independent. Indeed, you still have a single index.html, and therefore the same metadata for all your pages. Metadata is crucial for SEO, and each page should have its own metadata describing it. For this, you can use React Helmet. This package lets you manage the metadata of each page of your application by adding React components to your code. It is really simple to implement.

Here is an example of how to use React Helmet:

    import React from "react";
    import { Helmet } from "react-helmet";

    class Application extends React.Component {
      render() {
        return (
          <div className="application">
            {/* Tags placed inside Helmet are moved to the document head */}
            <Helmet>
              <meta charSet="utf-8" />
              <title>My awesome app</title>
              <link rel="canonical" href="https://my.awesome.app.com" />
            </Helmet>
            {/* the rest of your code here */}
          </div>
        );
      }
    }

With a catch-all route along with React Helmet, your app is now composed of several pages, all working and independent!
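The example above sets metadata for the application as a whole. To give each virtual page its own, one possible approach is a lookup keyed by path. This is only a sketch: `pageMetadata`, `metadataFor`, the titles, the descriptions and the `https://` origin are all assumptions made for illustration.

```javascript
// Hypothetical per-page metadata, keyed by route path. The titles,
// descriptions and the https:// origin are assumptions for illustration.
const ORIGIN = "https://my.awesome.app.com";
const pageMetadata = new Map([
  ["/", { title: "My awesome app", description: "Home of my awesome app" }],
  ["/foo", { title: "Foo | My awesome app", description: "Everything about foo" }],
]);

// Returns the metadata a page component would hand to Helmet.
function metadataFor(path) {
  const fallback = { title: "My awesome app", description: "" };
  const meta = pageMetadata.get(path) ?? fallback;
  return { ...meta, canonical: ORIGIN + path };
}

console.log(metadataFor("/foo"));
```

Inside a page component, the returned values would go into the `<title>` and `<link rel="canonical">` tags placed within `<Helmet>`, so that every URL ends up with its own metadata.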

The JavaScript problem

The first problem is now behind us. However, these steps don’t change the fact that JavaScript is not crawled well. This problem can be tackled by using prerender.io. This tool renders your pages in a browser and saves the resulting static HTML. When a crawler tries to visit your website, prerender takes over and sends the HTML files, which are much easier for the crawler to understand.
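The "take over" step relies on telling crawlers apart from regular visitors. A minimal sketch of that decision, assuming crawlers are recognized by their User-Agent header, could look like this. It is not prerender.io’s API: the function names are hypothetical and the list of bots is illustrative and far from complete.

```javascript
// Illustrative, incomplete list of crawler User-Agent fragments (an assumption).
const BOT_PATTERNS = ["googlebot", "bingbot", "duckduckbot", "baiduspider"];

// True when the User-Agent header looks like one of the known crawlers.
function isCrawler(userAgent) {
  const ua = (userAgent || "").toLowerCase();
  return BOT_PATTERNS.some((pattern) => ua.includes(pattern));
}

// Chooses what to answer with: the saved static HTML for crawlers,
// the regular JavaScript application for everyone else.
function chooseResponse(userAgent) {
  return isCrawler(userAgent) ? "prerendered-html" : "spa-index-html";
}

const googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)";
console.log(chooseResponse(googlebot)); // prints "prerendered-html"
```

Regular visitors keep getting the client-rendered application, so nothing changes for them.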

In short, if you are using React Router, creating a catch-all route and using React Helmet and prerender will help you reach your ranking goals!

Second solution: going isomorphic

Going isomorphic is a more complicated solution to set up if your project already exists and uses React Router, but it will improve your site in several ways: performance will be better, along with SEO.

Isomorphism is a term used to describe an application that uses both client-side rendering and server-side rendering to take the best parts of each method. With server-side rendering, the application is computed directly on the server, and a first rendered page is sent to the client so that the first content is displayed fast. This improves performance, as a server is generally more powerful than a mobile phone, for instance. The scripts that launch React are then sent. The page is now interactive and the user can navigate without requesting the server again, which also improves performance.
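The server-side half of this flow can be sketched without any library. The page contents, the bundle name and `renderDocument` are hypothetical; in a real React application the content would typically come from `renderToString` in `react-dom/server` rather than from hard-coded strings.

```javascript
// Hypothetical page contents; in a real app these would be React components.
const pages = new Map([
  ["/", "<h1>Home</h1>"],
  ["/foo", "<h1>Foo page</h1>"],
]);

// Server side: compute the full HTML for the requested path so the first
// response already contains readable content, then reference the client
// bundle that makes the page interactive.
function renderDocument(path) {
  const content = pages.get(path) ?? "<h1>Page not found</h1>";
  return [
    "<!DOCTYPE html>",
    "<html>",
    "  <body>",
    `    <div id="root">${content}</div>`,
    '    <script src="/bundle.js"></script>',
    "  </body>",
    "</html>",
  ].join("\n");
}

console.log(renderDocument("/foo"));
```

Unlike the empty div of a purely client-rendered app, the root element here already contains the page content when it reaches the browser or the crawler.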

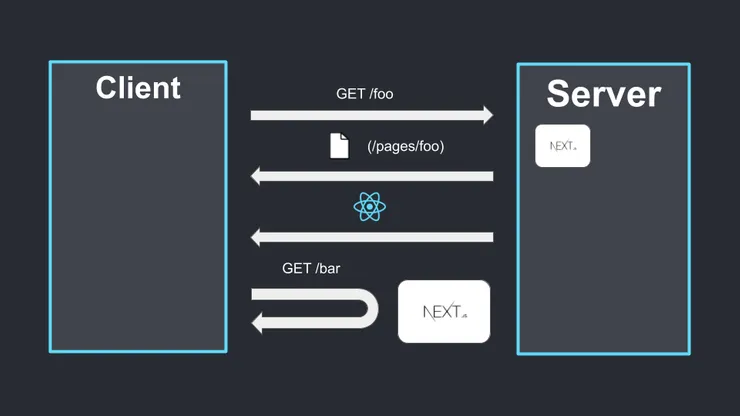

If you want to go isomorphic, you don’t have to come up with a solution yourself: it has already been created for you. You can use Next.js, for instance, a React framework that provides everything you need to create an isomorphic application. One requirement is to have a server that can run Node.js.

The server computes a page and sends it to the user, who can then navigate on the client side once React is loaded


This solution has a steeper learning curve and is more complicated to set up than the first one, but if you want your app to be really fast and to have excellent SEO, you really should give Next.js a try.

Great! Whichever solution you chose, you have tackled the one URL problem and crawlers will no longer have trouble reading your content. Your app is now ready to reach the top results. But there is still much to do in this regard, and to help you climb the ranks, I advise you to read this insightful article about how to rank first on Google.

I hope you enjoyed this read!